Why Is JRuby Slow?


Adam Gordon Bell

Recently, I made some contributions to the continuous integration process for Jekyll. Jekyll is a static site generator created by GitHub and written in Ruby, and it uses Earthly and GitHub Actions to ensure that it works with Ruby 2.5, 2.7, 3.0, and JRuby.

The build times looked like this:

| Ruby version | Build time |
| --- | --- |
| 2.5 | 8m 31s |
| 2.7 | 8m 33s |
| 3.0 | 7m 47s |
| JRuby | 45m 16s |

The Jekyll CI does lots of things that a simple Jekyll site build won’t, but clearly JRuby was slowing the whole process down by a significant amount, and this surprised me: wasn’t speed the whole point of using JRuby and its new sibling TruffleRuby? Why was JRuby so slow?

Even building this blog and using all the tips I’ve come across, the performance of Ruby on the JVM still looks like this:

| Runtime | Build time |
| --- | --- |
| MRI Ruby 2.7.0 | 2.64 seconds |
| Fastest Ruby on JVM Build | 25.7 seconds |

So why is JRuby slow in these examples? It turns out that the answer is complicated.

What’s JRuby?

“I was very pleased to discover the JRuby project, my favorite programming language running on what’s probably the best virtual machine in the world.” – Peter Lind

JRuby is an alternative Ruby interpreter that runs on the Java Virtual Machine (JVM). MRI Ruby, also known as CRuby, is written in C and is the original interpreter and runtime for Ruby.

Install JRuby

On my MacBook, I can switch from MRI Ruby to JRuby like this.

Install rbenv:

    brew install rbenv

List possible install options:

    rbenv install -l

Install:

    rbenv install jruby-9.2.16.0

Set a specific project to use JRuby:

    rbenv local jruby-9.2.16.0

Why do people use JRuby?

OMG #JRuby +Java.util.concurrent FTW! Doing a recursive backtrace by billion+, I’ve made it 30,000x faster than 1.9.3. 30 THOUSAND.

— /dave/null (@bokmann) September 21, 2013

There are several reasons people might choose JRuby, several of which have to do with performance.

Getting past the GIL

MRI Ruby, much like Python, has a global interpreter lock (GIL). This means that although you can have many threads in a single Ruby process, only one will ever be running Ruby code at a time. If you look at many of the benchmark shoot-out results, parallel multi-core solutions dominate. JRuby lets you sidestep the GIL as a bottleneck, at the cost of having to worry about writing thread-safe code.
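As a rough illustration of the point above (not taken from the benchmarks mentioned in this post), the following sketch times the same CPU-bound work run sequentially and on four threads. The `fib` workload and the method names are made up for this example. On MRI the two timings should come out similar because of the GIL, while a runtime without a GIL, such as JRuby, can spread the threads across cores. Actual numbers will vary by machine and runtime.

```ruby
require "benchmark"

# CPU-bound toy workload: naive recursive Fibonacci (hypothetical example workload).
def fib(n)
  n < 2 ? n : fib(n - 1) + fib(n - 2)
end

# Run the same workload `jobs` times, one thread per job, and return the results.
def fib_in_threads(n, jobs)
  threads = Array.new(jobs) { Thread.new { fib(n) } }
  threads.map(&:value)
end

# Run the same workload `jobs` times, one after another.
def fib_sequential(n, jobs)
  Array.new(jobs) { fib(n) }
end

if __FILE__ == $PROGRAM_NAME
  n = 27
  jobs = 4
  sequential = Benchmark.realtime { fib_sequential(n, jobs) }
  threaded   = Benchmark.realtime { fib_in_threads(n, jobs) }
  puts format("sequential: %.2fs, threaded: %.2fs", sequential, threaded)
end
```

Running the same file under MRI and under JRuby is one simple way to see whether the GIL is the limiting factor for a given workload.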

Library Access and Environment Access

A common driver for JRuby usage is the need for a Java-based library or the need to target the JVM. You might want to write an Android app or a Swing app using JRuby, or maybe you already have an existing Ruby codebase but need it to run on the JVM. My 2 cents is that if you are starting from scratch and need to target the JVM, JRuby should not be the first option you consider. If you do choose JRuby, be warned that you will need a good understanding of Java, the JVM, and Ruby: if you’re coming to the JVM for Java libraries and functionality, then JRuby won’t save you from having to read Java.

Long-Running Process Performance

MRI Ruby is known to be slow compared with the JVM or even Node.js. According to The Computer Language Benchmarks Game, it’s often 5-10x slower than a similar Java solution. Small performance benchmarks are often not the best way to compare practical performance, but one area where the JVM is known to perform very well compared with interpreted languages is long-running server applications, where adaptive optimizations can make a big difference.

Why is my JRuby Program Slow?

The JVM may be fast at running Java in benchmark games, but that doesn’t necessarily carry over to JRuby. The JVM makes different performance trade-offs than MRI Ruby. Notably, an untuned JVM process has a slow start-up time, and with JRuby this can get even worse, as lots of standard library code is loaded on start-up. The JVM starts by working as a bytecode interpreter and compiles “hot” code as it goes, but in a large Ruby project with lots of gems, the overhead of JITing all the Ruby code to bytecode can lead to a significantly slower start-up time.
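To get a feel for the start-up cost described above, one option is to time how long the interpreter takes to launch and exit with an empty script. The sketch below is only an assumption about how you might measure this; `average_startup_seconds` is a name made up for this example, and it launches whichever Ruby is currently running it (via `RbConfig.ruby`), so run it once under MRI and once under JRuby to compare.

```ruby
require "benchmark"
require "rbconfig"

# Launch the given interpreter `runs` times with an empty script and
# return the average wall-clock seconds per launch.
def average_startup_seconds(runs, interpreter = RbConfig.ruby)
  total = Benchmark.realtime do
    runs.times do
      # `system` returns a falsy value when the launch fails or exits non-zero.
      system(interpreter, "-e", "") or raise "could not launch #{interpreter}"
    end
  end
  total / runs
end

if __FILE__ == $PROGRAM_NAME
  puts format("average start-up: %.3fs", average_startup_seconds(5))
end
```

This only captures bare interpreter start-up. Loading gems, as a real Jekyll build does, adds further time on top.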

If you are using JRuby at the command line or starting lots of short-lived JRuby processes, then it is likely that JRuby will be slower than MRI Ruby. However, the JVM is highly tunable, and it’s possible to tune things to behave more like standard Ruby. If you’d like your JRuby to behave more like MRI Ruby, you probably want to set the --dev flag. Either like this:

    ENV JRUBY_OPTS="--dev"

OR

    jruby --dev file.rb

In my Jekyll use case, this change and some other small JVM parameter tweaks made a big difference. I was able to get the build time down from 45m 16s to 24m 1s.

| Runtime | Build time |
| --- | --- |
| JRuby | 45m 16s |
| JRuby --dev | 24m 1s |

--dev gets us closer to MRI Ruby

Inside --dev

The --dev flag indicates to JRuby that you are running it as a developer and would prefer fast start-up time over absolute performance. JRuby, in turn, tells the JVM to do only a single level of JIT (-J-XX:TieredStopAtLevel=1) and not to bother verifying the bytecode (-J-Xverify:none). More details on the flag can be found here.

Why is my JRuby Program Wrong?

Ruby’s built-in types were built with the GIL in mind and are not thread-safe on the JVM. If you switch to JRuby to sidestep the GIL, keep in mind that you may be introducing threading bugs. If you get unexpected or non-deterministic results in your concurrent array usage, you should look at concurrent data structures for the JVM like ConcurrentHashMap or ConcurrentSkipListMap. You may find that they not only fix the threading issues but may be orders of magnitude faster than the idiomatic Ruby way. Jekyll is not multi-threaded, however, so this is not an issue I needed to worry about.
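The kind of bug described above usually comes from several threads mutating one shared collection. The sketch below is a minimal, runtime-neutral way to guard such a collection with a `Mutex`. It is an illustration only, not what Jekyll or JRuby do internally, and `guarded_appends` is a name invented for this example. On JRuby, the `java.util.concurrent` classes named above would be an alternative to the explicit lock.

```ruby
# Append `per_thread` items from each of `thread_count` threads into one shared Array.
# The Mutex ensures that only one thread appends at a time.
def guarded_appends(thread_count, per_thread)
  results = []
  lock = Mutex.new
  threads = Array.new(thread_count) do |t|
    Thread.new do
      per_thread.times { |i| lock.synchronize { results << [t, i] } }
    end
  end
  threads.each(&:join)
  results
end

if __FILE__ == $PROGRAM_NAME
  items = guarded_appends(4, 10_000)
  puts "collected #{items.size} items"
end
```

Without the lock, a runtime with truly parallel threads gives no guarantee that every append lands, which is the non-deterministic behavior the paragraph above warns about.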

What’s TruffleRuby?

GraalVM is a JVM with different goals than the standard Java virtual machine.

According to Wikipedia, these goals are:

- To improve the performance of Java virtual machine-based languages to match the performance of native languages.

- To reduce the start-up time of JVM-based applications by compiling them ahead-of-time with GraalVM Native Image technology.

- To enable freeform mixing of code from any programming language in a single program.

Increased performance and better start-up time sound exactly like what we need to improve on JRuby, and this fact didn’t go unnoticed: TruffleRuby is a fork of JRuby that runs on GraalVM. Because GraalVM supports both ahead-of-time (AOT) compilation and JIT, it’s possible to optimize either for peak performance of a long-running service or for start-up time, which is valuable for shorter-running command-line apps like Jekyll.

TruffleRuby explains the trade-offs of AOT vs. JIT like this:

| | Native (AOT) | JVM (JIT) |
| --- | --- | --- |
| Time to start TruffleRuby | about as fast as MRI start-up | slower |
| Time to reach peak performance | faster | slower |
| Peak performance (also considering GC) | good | best |
| Java host interoperability | needs reflection configuration | just works |

Install TruffleRuby

Install rbenv:

    brew install rbenv

List possible install options:

    rbenv install -l

Install:

    rbenv install truffleruby+graalvm-21.0.0
    rbenv local truffleruby+graalvm-21.0.0
    ruby --version

    truffleruby 21.0.0, like ruby 2.7.2, GraalVM CE Native [x86_64-darwin]

Set mode to --native:

    ENV TRUFFLERUBYOPT='--native'

Performance Shoot-out

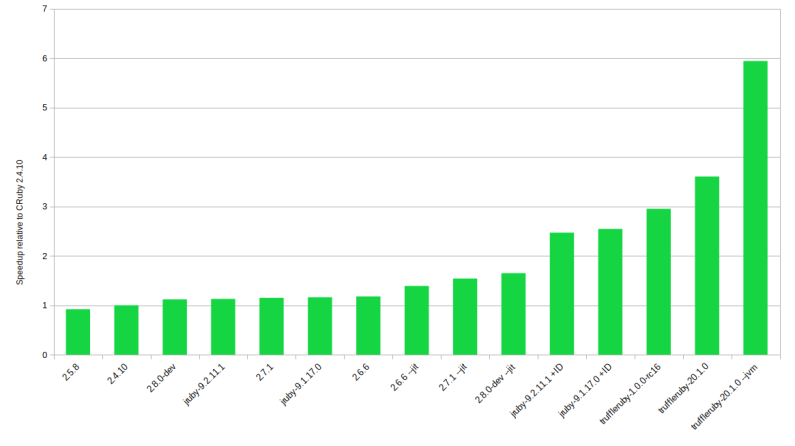

TruffleRuby is significantly better in CPU-heavy performance tests than JRuby, whose performance is significantly better than MRI Ruby. PragToby has a great breakdown:

However, in my testing with Jekyll and the Jekyll CI pipeline, JRuby and TruffleRuby are significantly slower than MRI Ruby. How can this be?

I think there are two reasons for this:

- Real-world projects like Jekyll involve much more code, and JITing that code has a high start-up cost.

- Real-world code like Jekyll or Rails is optimized for MRI Ruby, and many of those optimizations don’t help or actively hinder the JVM.

Failure Of Fork

The most obvious place where you see this difference is multi-process Ruby programs. The GIL is not an issue across processes, and the relatively fast start time of MRI Ruby is an advantage when forking a new process. On the other hand, JVM programs are often written in a multi-threading style where code only needs to be JIT’d once, and the start-up cost is shared across threads. In fact, if you ignore language shoot-out games, where everything is a single process, and instead compare an MRI multi-process approach to a TruffleRuby multi-threading approach, many advantages of the JVM seem to disappear.

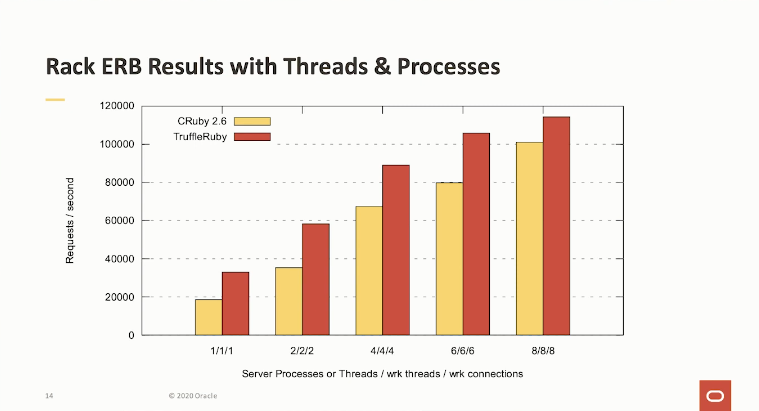

This chart comes from Benoit Daloze[1], the TruffleRuby lead. The benchmark in question is a long-running server-side application using a minimal web framework. It is in the sweet spot of Graal and TruffleRuby, with little code to JIT and plenty of time to make up for a slow start. But even so, MRI Ruby does well.

Which brings me back to my original question: Why is JRuby slow for Jekyll? I do not see similar times but significantly slower times. Jekyll is not forking processes, so that is not the issue. Hugo, the static site builder for Go, is significantly faster than Jekyll. So we know that Jekyll is not at the limits of hardware where there is simply no more performance to squeeze out.

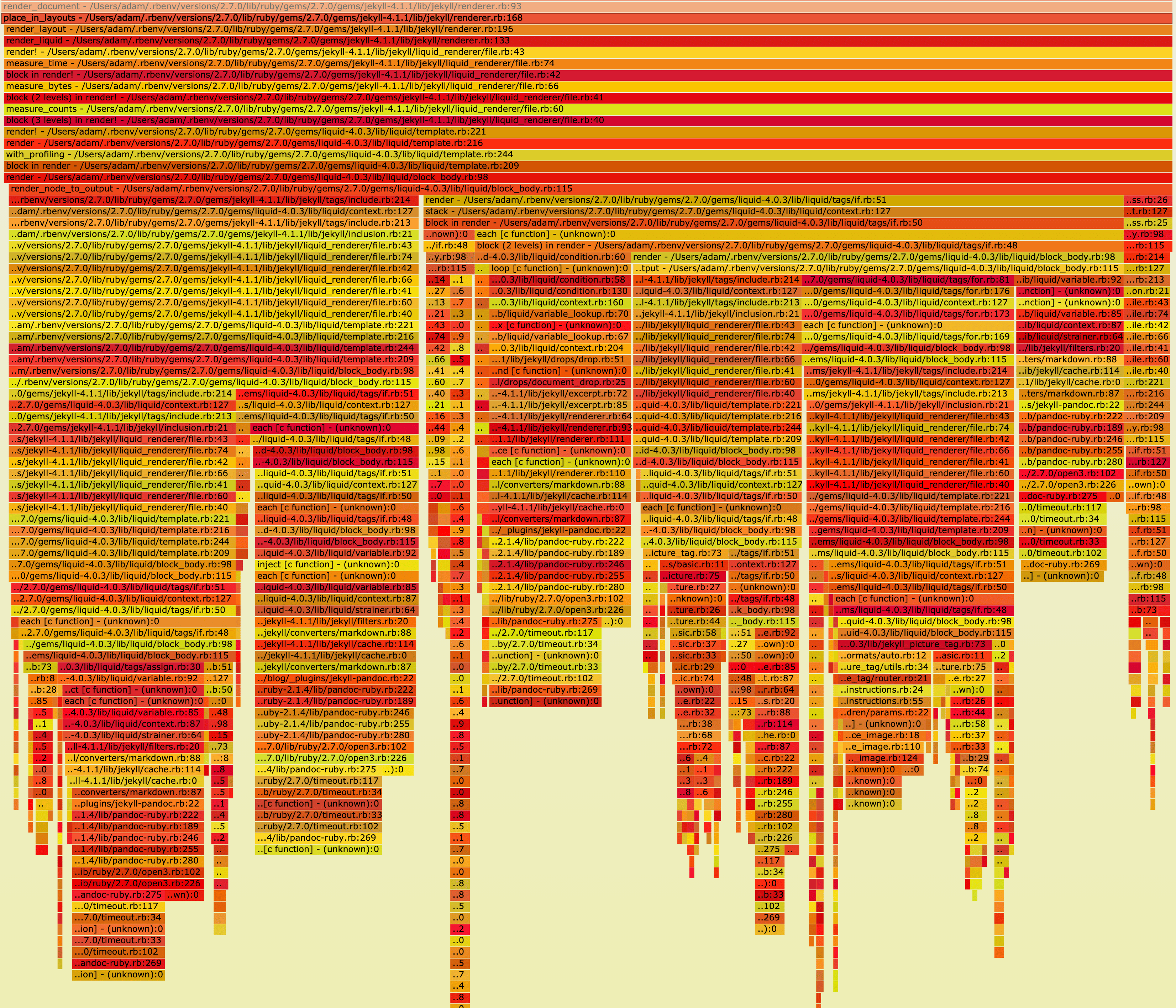

Test with RubySpy

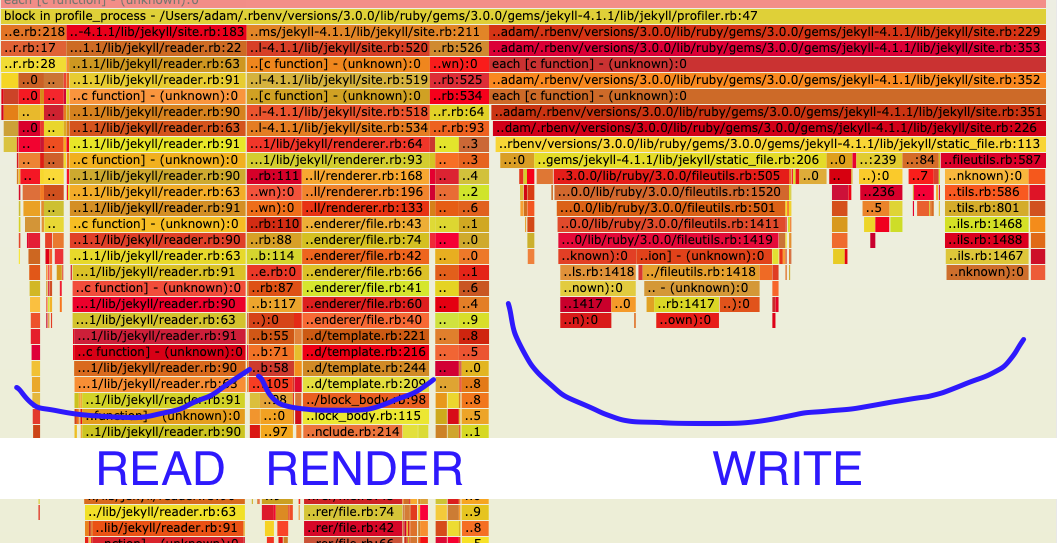

To dig into this, let’s take a look at a flame graph of the Jekyll build for this blog using RubySpy:

| Runtime | Build time |
| --- | --- |
| MRI Ruby 2.7.0 | 2.64 seconds |
| TruffleRuby-dev | 25.7 seconds |

Jekyll Test 1

    sudo RUBYOPT='-W0' rbspy record -- bundle exec jekyll build --profile

What we see is that 50% of the wall time was spent writing files:

    # Write static files, pages, and posts.
    #
    # Returns nothing.
    def write
      each_site_file do |item|
        item.write(dest) if regenerator.regenerate?(item)
      end
      regenerator.write_metadata
      Jekyll::Hooks.trigger :site, :post_write, self
    end

And 16% of the time was spent reading files:

    # Read Site data from disk and load it into internal data structures.
    #
    # Returns nothing.
    def read
      reader.read
      limit_posts!
      Jekyll::Hooks.trigger :site, :post_read, self
    end

Overall, only 22% of the time was spent doing the actual work of generating HTML:

    # Render the site to the destination.
    #
    # Returns nothing.
    def render
      relative_permalinks_are_deprecated
      payload = site_payload
      Jekyll::Hooks.trigger :site, :pre_render, self, payload
      render_docs(payload)
      render_pages(payload)
      Jekyll::Hooks.trigger :site, :post_render, self, payload
    end

In other words, most of the time (roughly two thirds) is spent reading from and writing to the disk. Clearly, the Hugo case shows us this could be faster: we aren’t hitting a hardware limit. But why does this run even slower in JRuby and TruffleRuby than it does in MRI Ruby? Let’s try another test.

Jekyll Test 2

Testing the build process for another Jekyll site gives similar timings: TruffleRuby is significantly slower.

| Runtime | Build time |
| --- | --- |
| MRI Ruby 2.7.0 | 20 seconds |
| TruffleRuby-dev | 116 seconds |

This time the flame graph shows most time is spent rendering Liquid templates rather than on IO. I wasn’t able to figure out a way to get a flame graph out of TruffleRuby.

So what does this mean? My guess is that the filesystem Ruby code or the Liquid templates do not benefit from being on the JVM. On the contrary, they seem to run slower.

It may be possible to reimplement write and read to use JVM high-performance file access best practices, and it may be possible to reimplement Liquid templates in a Java-native way. That should bring a speed-up, but I’m not sure whether it would make JRuby faster than MRI Ruby for Jekyll or only bring it up to similar performance.

Performance Advice

All this leaves me with the most generic performance advice: you should test your Ruby codebase with different runtimes and see what works best for you.

If your code is long-running, CPU bound, and thread-based, and if the GIL limits you, TruffleRuby will likely be a win. Also, if that is the case and you tweak your code to use Java concurrent data structures in place of Ruby defaults, you can probably achieve an order of magnitude speed-up. If the garbage collector is a bottleneck in your app, that may be another reason for trying out a different runtime.

However, if your existing Ruby codebase is not CPU bound and not multi-threaded, it will probably run slower on JRuby and TruffleRuby than with the MRI Ruby runtime.

Also, I might be wrong. If I missed something important, then I’d love to hear from you. Here at Earthly we take build performance very seriously, so if you have further suggestions for speeding up Ruby or Jekyll, I’d love to hear them.[2]

Updates

Update #1

Both @ChrisGSeaton, the creator of TruffleRuby, and @headius, who works on JRuby, have responded on reddit with suggestions and requests for reproduction steps. I’m going to put together an example repo to share.

Update #2

The place where JRuby and TruffleRuby shine is long-running processes that have had time to warm up. Based on suggestions, I put together a repo of a simple small Jekyll build being built 20 times by the same process in a repo here. After 20 builds with the same running process the build times do start to converge, but even after that MRI Ruby is still fastest.
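The idea behind that experiment can be sketched as a small timing harness that repeats a workload inside one process and records each run, so that warm-up shows up as falling timings. This is not the code from the repo mentioned above; `time_repeated` and the stand-in workload are assumptions made for illustration.

```ruby
require "benchmark"

# Call the block `iterations` times in this process and return each run's
# wall-clock duration in seconds, in order.
def time_repeated(iterations)
  Array.new(iterations) { Benchmark.realtime { yield } }
end

if __FILE__ == $PROGRAM_NAME
  # Stand-in workload; in the experiment above this would be one Jekyll build.
  timings = time_repeated(20) { (1..200_000).map { |i| i.to_s.reverse }.sort }
  timings.each_with_index { |t, i| puts format("run %2d: %.3fs", i + 1, t) }
end
```

On a JIT-based runtime the later runs would be expected to be faster than the first few, while on MRI the timings should stay roughly flat.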

Update #3

I have filed a bug with TruffleRuby and received some performance advice that I think is worth sharing here:

Some Feedback on The Assumptions of The Article

Hi, here are some notes on the blog post.

“TruffleRuby is a fork of JRuby”

Technically true from a repository point of view, and that’s what the README says (I’ll update that), but in practice it’s like >90% of the code is not from JRuby. It’s quite different technologies.

“Hugo, the static site builder for Go, is significantly faster than Jekyll. So we know that Jekyll is not at the limits of hardware where there is simply no more performance to squeeze out.”

I’d bet that’s in part due to a different design. Likely Hugo is better optimized and might do much less work due to different constraints.

“However, if your existing Ruby codebase is not CPU bound and not multi-threaded, it will probably run slower on JRuby and TruffleRuby than with the MRI Ruby runtime.”

I think there is no such simple rule, and there is also the question of what “not CPU bound” means, i.e. how much time is spent in the kernel. TruffleRuby can be faster on many Ruby workloads: as long as there is Ruby code to run, there is potential for optimization. Of course, if 90% is spent in read/write system calls, only 10% of it can be optimized by a Ruby implementation, but I would expect that’s pretty rare.

The main thing, I think, is that if it’s not almost entirely IO-bound, then there is potential to speed up. And the best way to know for sure is to try it, as you say.
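The 90%/10% remark above is an instance of Amdahl’s law: if only a fraction of the run time is Ruby code, that fraction caps the overall gain no matter how fast the runtime gets. The helper below is a small worked version of that arithmetic; `overall_speedup` is a name made up for this example, and the fractions are illustrative rather than measured.

```ruby
# Amdahl's law: overall speed-up when only `ruby_fraction` of the run time
# is Ruby code and a faster runtime makes that part `ruby_speedup` times faster.
def overall_speedup(ruby_fraction, ruby_speedup)
  1.0 / ((1.0 - ruby_fraction) + ruby_fraction / ruby_speedup)
end

if __FILE__ == $PROGRAM_NAME
  # 10% Ruby code, made infinitely fast: the other 90% still bounds the gain.
  puts format("best case at 10%% Ruby: %.2fx", overall_speedup(0.1, Float::INFINITY))
  # 34% Ruby code (illustrative figure), made 5x faster.
  puts format("34%% Ruby at 5x: %.2fx", overall_speedup(0.34, 5.0))
end
```

With 90% of the time in system calls, even an infinitely fast Ruby implementation yields at most about a 1.11x overall improvement.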

“I’d especially love to hear how to get a flame graph out of TruffleRuby”

Thank you for the issue at https://github.com/oracle/truffleruby/issues/2363. Currently TruffleRuby has a number of Ruby-level profilers (--cpusampler, VisualVM, Chrome Inspector). We’re working on having an easy way to get a flame graph (right now we use this, but one needs to clone the truffleruby repo, which is less convenient). Java-level profiling is also possible via VisualVM. async-profiler needs something like JDK>=15 to work well with Graal compilations IIRC.

Some Advice for Making Ruby Single Process

Your best solution for both JRuby and TruffleRuby would be to set it up to use a single process to do everything. In JRuby, it is trivial to start up a separate, isolated JRuby instance within the same process:

    my_jruby = org.jruby.Ruby.new_instance
    my_jruby.eval_script(ruby_code)

The code given will run in the same process but in a completely different JRuby environment. This could be adapted to run your “subprocesses” without starting a new JVM every time.

TruffleRuby likely has something similar based on GraalVM polyglot APIs.

If you managed to get your CI run to use a single process, I would be surprised if it was not as fast or faster than standard C-based Ruby.

Note: The article does cover --dev and I did put together a single-process example in update 2. The results are much better, but still slower.

Using Application Class Data Sharing

AppCDS is a way to significantly improve start-up speed without impacting total runtime performance. It’d be interesting to see if using that helps with performance. https://medium.com/@toparvion/appcds-for-spring-boot-applications-first-contact-6216db6a4194

Finally, if you can, I’d be interested to see what happens if you work from a ramdisk rather than the HD. I believe the IO problems you were likely seeing are because of a lot of back-and-forth communication between the app and the disk. What happens if you cut that off?

The feedback from the JRuby and TruffleRuby people has been fantastic. They’ve been offering suggestions and asking for tickets and reproduction steps. These are ambitious projects that keep improving, and I’m excited to hear that making Jekyll run faster than it does on CRuby is very possible with some additional elbow grease.